MemoryMaker

In Beta

RAG application using vector embeddings for intelligent knowledge retrieval and search.

Date

2024-04

Duration

6 weeks

Team

solo

Difficulty

hard

Project Story

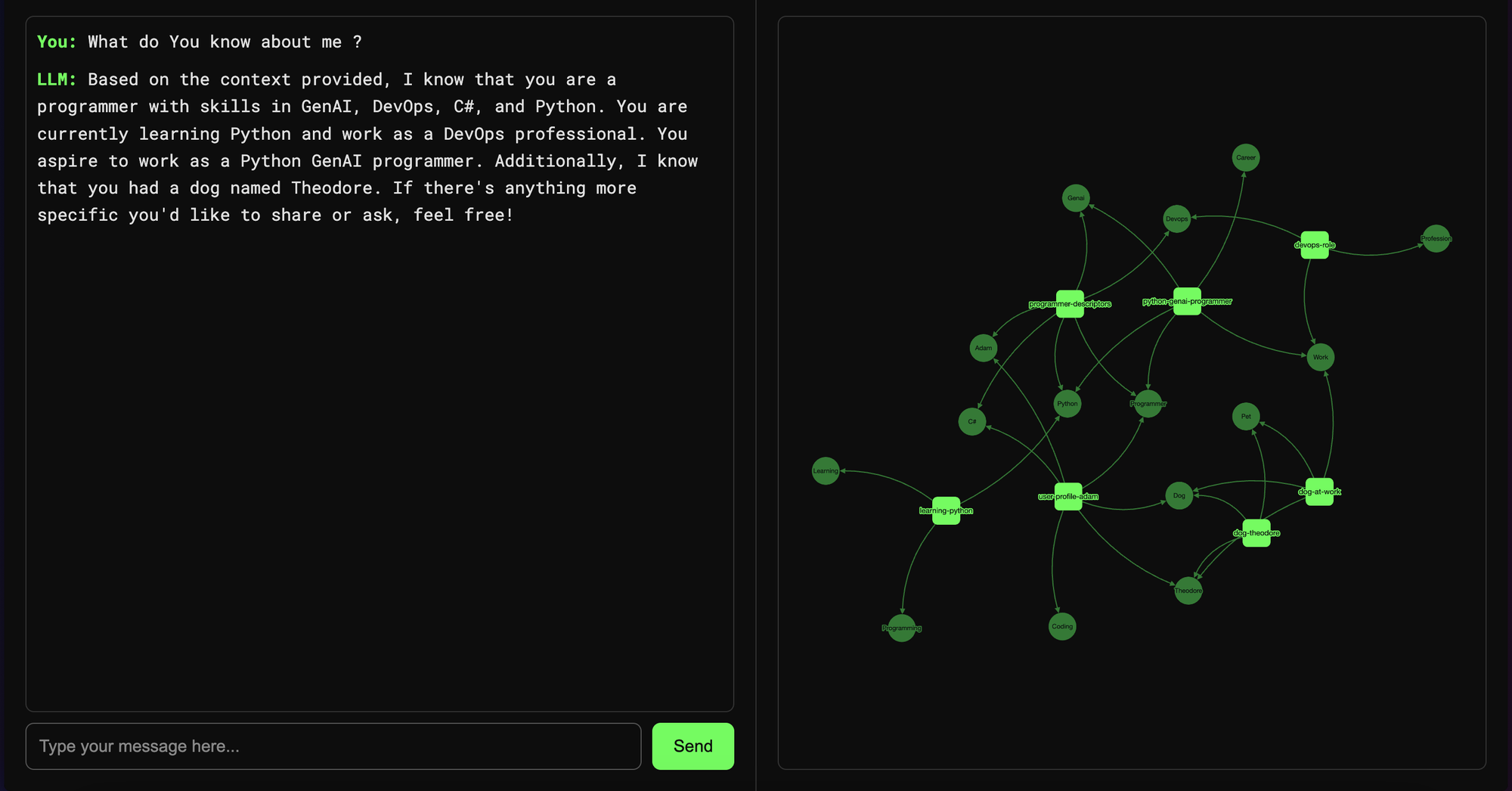

MemoryMaker explores Retrieval-Augmented Generation by turning private documents into a searchable knowledge base.

OpenAI embeddings and FAISS provide the retrieval layer, while prompt orchestration improves answer grounding and relevance.
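To make the retrieve-then-prompt flow concrete, here is a minimal sketch rather than the project's actual code. It assumes two stand-ins so that it runs offline: `ToyEmbedder` (a hypothetical normalized bag-of-words embedder) replaces the OpenAI embedding call, and an exhaustive inner-product scan in `search` replaces the FAISS index. On unit-length vectors, inner product equals cosine similarity, which is the same ranking an exact inner-product index such as FAISS's `IndexFlatIP` would produce. The prompt wording in `build_prompt` is also an assumption.

```python
import math
import re


def tokenize(text: str) -> list[str]:
    """Lowercase and split on non-alphanumeric characters."""
    return re.findall(r"[a-z0-9]+", text.lower())


class ToyEmbedder:
    """Hypothetical stand-in for an embedding model: unit-length bag-of-words vectors."""

    def __init__(self, corpus: list[str]) -> None:
        vocab = sorted({tok for doc in corpus for tok in tokenize(doc)})
        self.slot = {tok: i for i, tok in enumerate(vocab)}

    def embed(self, text: str) -> list[float]:
        vec = [0.0] * len(self.slot)
        for tok in tokenize(text):
            if tok in self.slot:  # words unseen at indexing time are ignored
                vec[self.slot[tok]] += 1.0
        norm = math.sqrt(sum(v * v for v in vec)) or 1.0
        return [v / norm for v in vec]


def search(index, embedder, query: str, k: int = 2):
    """Exhaustive inner-product search; returns the k best (score, text) pairs."""
    q = embedder.embed(query)
    scored = [(sum(a * b for a, b in zip(q, vec)), text) for text, vec in index]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


def build_prompt(question: str, hits) -> str:
    """Ground the answer by restricting the model to the retrieved passages."""
    context = "\n".join(f"[{i + 1}] {text}" for i, (_, text) in enumerate(hits))
    return (
        "Answer using only the numbered context. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


docs = [
    "Invoices are archived after ninety days.",
    "The VPN requires a hardware token.",
    "Archived invoices can be restored by finance.",
]
embedder = ToyEmbedder(docs)
index = [(doc, embedder.embed(doc)) for doc in docs]

question = "How are invoices archived?"
hits = search(index, embedder, question, k=2)
prompt = build_prompt(question, hits)
```

In a real deployment the two stand-ins would be swapped for the embedding API and a FAISS index, while `build_prompt` would stay roughly the same: only the retrieved passages reach the model.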

Technical Details

Tech Stack

Python

OpenAI Embeddings

FAISS

Vector Search

RAG

Key Features

Document indexing pipeline

Semantic retrieval

Natural-language querying

Context-aware answers

Batch processing flow
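The indexing pipeline and batch processing flow listed above could look roughly like the sketch below. It is an illustration under assumptions, not the project's implementation: `batched`, `index_chunks`, and `fake_embed_batch` are hypothetical names, and `fake_embed_batch` stands in for a real embedding request that accepts several texts at once.

```python
from typing import Callable, Iterator, Sequence

Vector = list[float]


def batched(items: Sequence[str], size: int) -> Iterator[Sequence[str]]:
    """Yield consecutive slices of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def index_chunks(
    chunks: Sequence[str],
    embed_batch: Callable[[Sequence[str]], list[Vector]],
    batch_size: int = 2,
) -> list[tuple[str, Vector]]:
    """Embed chunks batch by batch and pair each chunk with its vector."""
    records: list[tuple[str, Vector]] = []
    for batch in batched(chunks, batch_size):
        vectors = embed_batch(batch)
        if len(vectors) != len(batch):
            raise RuntimeError("embedder returned a different number of vectors")
        records.extend(zip(batch, vectors))
    return records


calls: list[int] = []


def fake_embed_batch(batch: Sequence[str]) -> list[Vector]:
    """Hypothetical embedder: records the batch size, returns 1-d vectors."""
    calls.append(len(batch))
    return [[float(len(text))] for text in batch]


records = index_chunks(["a", "bb", "ccc", "dddd", "eeeee"], fake_embed_batch, batch_size=2)
```

Batching keeps the number of embedding requests low, which matters when compute or API budget is tight; the resulting `(chunk, vector)` records are what a vector index would then ingest.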

Challenges Faced

Vector index lifecycle management

Retrieval quality tuning

Document preprocessing complexity

Compute budget constraints
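Much of the preprocessing difficulty comes down to cleaning text and cutting it into chunks before embedding. A minimal sketch, assuming word-based chunks with a fixed overlap (the function names and the default `size` and `overlap` values are illustrative, not taken from the project):

```python
import re


def normalize(text: str) -> str:
    """Collapse runs of whitespace, including newlines, into single spaces."""
    return re.sub(r"\s+", " ", text).strip()


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of up to `size` words that share `overlap` words."""
    if size < 1 or not 0 <= overlap < size:
        raise ValueError("need size >= 1 and 0 <= overlap < size")
    cleaned = normalize(text)
    words = cleaned.split(" ") if cleaned else []
    step = size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # the last chunk already reaches the end
            break
    return chunks


sample = "one two  three\nfour five six seven"
chunks = chunk_words(sample, size=4, overlap=2)
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of indexing some words twice.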

Key Learnings

Chunking strategy strongly affects retrieval quality

RAG improves trust for private-domain answers

FAISS gives a strong performance-to-complexity ratio

Evaluation loops are needed for relevance tuning
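One simple form an evaluation loop can take is recall@k over a small hand-labelled set of queries. The sketch below is an assumption about how such a loop might be built, not a record of the project's evaluation: `recall_at_k`, the chunk ids, and the canned `rankings` are all hypothetical, and `toy_retrieve` stands in for a real retriever.

```python
from typing import Callable, Sequence


def recall_at_k(
    retrieve: Callable[[str, int], Sequence[str]],
    labelled: Sequence[tuple[str, str]],
    k: int,
) -> float:
    """Fraction of queries whose known-relevant chunk id is in the top k results."""
    if not labelled:
        raise ValueError("need at least one labelled query")
    found = sum(1 for query, relevant in labelled if relevant in retrieve(query, k))
    return found / len(labelled)


# Hypothetical fixed rankings standing in for a real retriever's output.
rankings = {
    "refund policy": ["c2", "c7", "c1"],
    "vpn setup": ["c4", "c3", "c9"],
    "holiday calendar": ["c8", "c5", "c6"],
}


def toy_retrieve(query: str, k: int) -> Sequence[str]:
    return rankings[query][:k]


labelled = [("refund policy", "c7"), ("vpn setup", "c9"), ("holiday calendar", "c8")]
scores = {k: recall_at_k(toy_retrieve, labelled, k) for k in (1, 2, 3)}
```

Re-running the same labelled set after each change to chunk size, overlap, or `k` shows whether a tuning step actually helped retrieval.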

